Waterfall 2.0

Waterfall 2.0 - The Quiet Return of the Methodology We Mocked

Open any AI-coding repo on GitHub today and count the Markdown files. Then count the lines of code. The ratio has been climbing for two years, and in some projects - especially those built around Claude Code, Cursor, or Aider - the specs now rival the implementation in size.

You will find PRDs, architecture decision records, AGENTS.md, CLAUDE.md, plans.md, tickets.md, and a folder called /specs that nobody would have opened in 2019.
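For anyone who wants to run the count rather than eyeball it, here is a rough sketch. The extension sets, the skipped directories, and the function name `spec_to_code_ratio` are all assumptions made for illustration; counting non-blank lines is a crude proxy, and a real repo will need its own lists.

```python
from pathlib import Path

# Assumptions: which extensions count as "spec" and which as "code".
SPEC_EXTENSIONS = {".md", ".mdx"}
CODE_EXTENSIONS = {".py", ".ts", ".js", ".go", ".rs", ".java"}
# Assumption: directories that hold vendored or generated files.
SKIP_DIRS = {".git", "node_modules", ".venv", "dist", "build"}


def count_lines(path: Path) -> int:
    """Count non-blank lines; unreadable or non-UTF-8 files count as zero."""
    try:
        text = path.read_text(encoding="utf-8")
    except (UnicodeDecodeError, OSError):
        return 0
    return sum(1 for line in text.splitlines() if line.strip())


def spec_to_code_ratio(root: str) -> tuple[int, int, float]:
    """Return (spec_lines, code_lines, ratio) for the tree under root."""
    base = Path(root)
    spec_lines = 0
    code_lines = 0
    for path in base.rglob("*"):
        if not path.is_file():
            continue
        # Only look at path components below the root when skipping dirs.
        if SKIP_DIRS.intersection(path.relative_to(base).parts):
            continue
        suffix = path.suffix.lower()
        if suffix in SPEC_EXTENSIONS:
            spec_lines += count_lines(path)
        elif suffix in CODE_EXTENSIONS:
            code_lines += count_lines(path)
    # A repo with specs but no code yet has an unbounded ratio.
    ratio = spec_lines / code_lines if code_lines else float("inf")
    return spec_lines, code_lines, ratio
```

Calling `spec_to_code_ratio(".")` from a repo root returns the two line counts and their ratio. A README inflates the spec side and generated code inflates the other, so treat the number as a conversation starter, not a metric.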

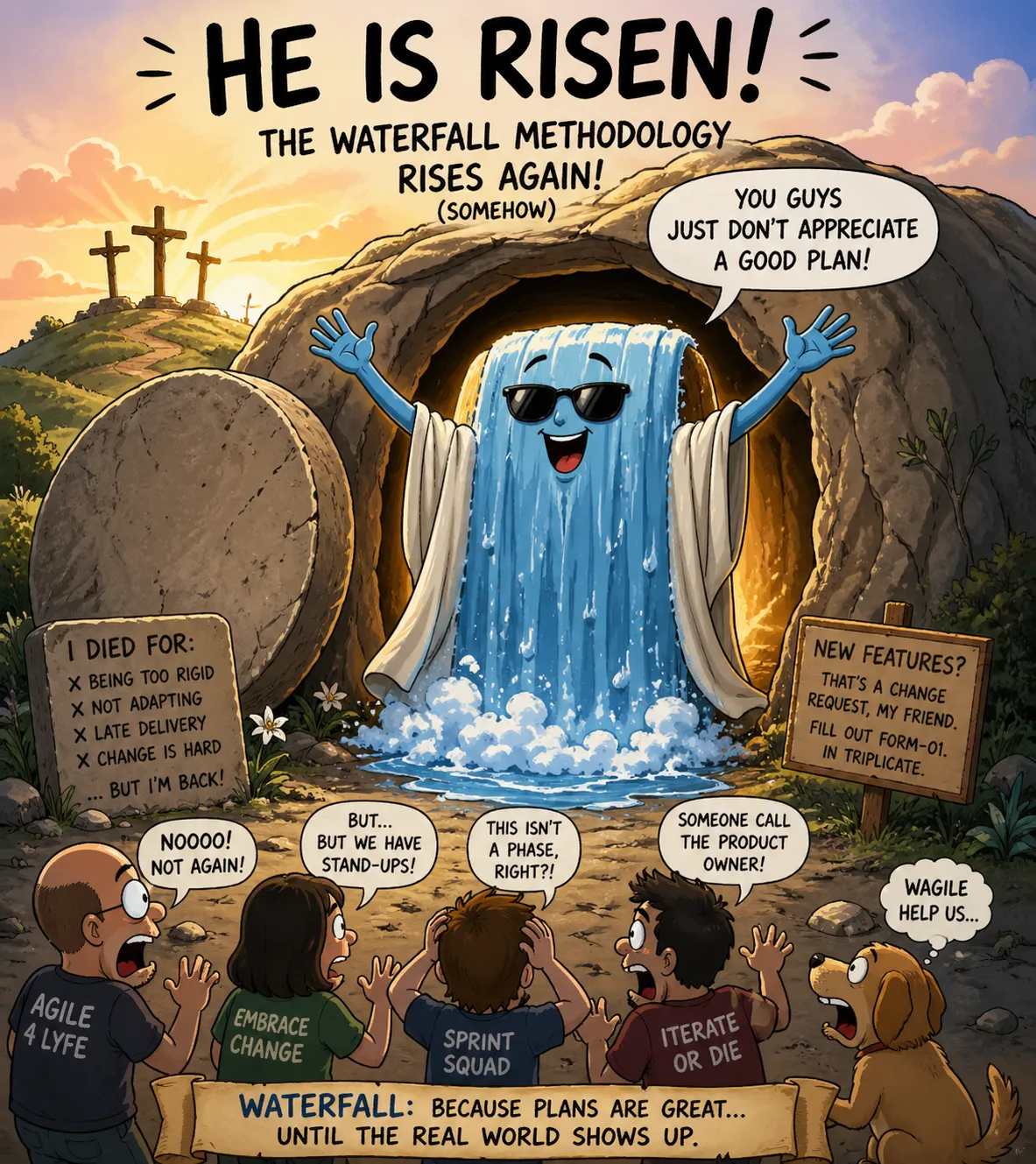

We told ourselves we'd buried waterfall. We wrote a manifesto about it. And yet here we are, writing a four-thousand-word specification before a single function exists.

The Thing We Said We'd Never Do Again

Winston Royce's 1970 paper Managing the Development of Large Software Systems is the foundational document of waterfall - and one of the great misreadings in software history. Royce drew the famous diagram (requirements → design → implementation → testing → operations) on page two and then spent the next eight pages explaining why this approach was, in his words, "risky and invites failure". He proposed iteration. He proposed prototyping. He proposed throwing the first version away. The Department of Defense read page two, ignored the rest, and built decades of procurement standards on top of it. DOD-STD-2167, released in 1985, codified the misreading as a formal standard.

By the late 1990s the cost was visible everywhere. The Standish Group's 1995 CHAOS Report found that only about sixteen percent of software projects finished on time and on budget. The C3 payroll project at Chrysler - where Kent Beck was working - became a kind of Patient Zero for what came next. In February 2001, seventeen developers met at Snowbird, Utah, and signed the Agile Manifesto. The line that mattered most was "working software over comprehensive documentation".

The whole point was that you could not specify your way out of complexity. You had to build your way out, in small increments, getting feedback at each step.

The Ghost of the Past - Specify, Then Generate

Here is the part most people forget. The dream of writing detailed specifications and then generating code is much, much older than modern AI. It is, in fact, one of the most persistent failed dreams in our field.

In the 1980s, CASE tools (Computer-Aided Software Engineering) promised exactly this - draw the data flow diagrams, fill in the entity-relationship models, and the system writes the code. Companies poured billions into IEF, IEW, and Excelerator. By 1995, Gartner was running funeral services for the category.

In the late 1990s and early 2000s, UML and the OMG's Model-Driven Architecture revived the dream in new clothes. Rational Rose. Executable UML. "The model is the code." The movement that grew up around the UML of Grady Booch, Ivar Jacobson, and James Rumbaugh held out the hope that platform-independent models would reduce programming languages to an implementation detail. Twenty-five years later, UML survives mainly as a way to torment undergraduates.

Around the same time as the first CASE tools, Donald Knuth's literate programming (1984) proposed the gorgeous idea that source code should be a document explaining itself to humans, with the executable being almost a side effect. Beautiful, mostly ignored.

The throughline is hard to miss: every generation tries to push the source of truth up, away from code, into something more human-readable. And every generation discovers the same thing - the specification ends up either so vague that the generated code is wrong, or so detailed that it is the code, just expressed in a worse language than the one we already had.

What's Different Now (And What Isn't)

The believers argue this time is different, and they have a real point. Previous attempts at specify-then-generate failed because the generators were brittle - they could only produce CRUD apps, or they emitted unmaintainable spaghetti, or they choked on the long tail of business logic. Large language models handle ambiguity. They interpolate. They can take a vague PRD and produce something plausible.

So maybe the dream finally works.

But notice what is actually happening in the workflow. A developer opens a fresh repo. Before writing code, they write a PROJECT.md describing the architecture. Then a plan.md breaking it into phases. Then per-feature spec files. The agent reads these and produces an initial implementation. The developer reviews, finds the model misunderstood something, and - crucially - goes back and edits the spec, then asks for regeneration.

That is not agile. That is waterfall with the implementation phase compressed from weeks to minutes. The bottleneck has moved, but the shape is identical - heavy upfront specification, a generation phase, then verification. Royce would recognize it instantly. He'd probably also recognize the part where the spec drifts out of sync with the code by week three.

What Brooks Would Say

Frederick Brooks, in his 1986 essay No Silver Bullet, made a distinction we should drag back into the daylight - essential complexity (the inherent difficulty of the problem) versus accidental complexity (the difficulty of the tools, languages, and ceremony around solving it).

Brooks argued that no single development, in technology or management, would by itself deliver a tenfold improvement in productivity within a decade, because tools only address accidental complexity, and most accidental complexity had already been wrung out. The hard part - figuring out what the software should do - is essential, and no tool does it for you.

The optimistic read of Waterfall 2.0 is that AI is finally doing what Brooks said no silver bullet ever could - collapsing accidental complexity to near zero, leaving humans to focus on essential complexity, which is exactly what specs are for. The pessimistic read is that we have discovered a new kind of accidental complexity - prompt engineering, spec maintenance, context window management, agent orchestration, evaluation harnesses - and we are calling it progress because the new ceremony feels more sophisticated than the old. The number of meta-files in a modern AI-assisted repo would have made a 1990s Rational consultant weep with joy.

The Agile Critique Still Applies

The deepest insight of agile was not "iterate fast". It was epistemological - you do not know what you want until you see it working. Requirements are not discovered through introspection - they emerge through contact with reality. The user changes their mind when they see the prototype. The edge case appears when real data hits the function. The architecture's flaw is invisible until you are three features deep.

If that is true - and forty years of evidence suggest it is - then no amount of AI-assisted generation rescues a process where humans write a long specification before any code runs. You will still discover, in week three, that the spec was wrong in ways you could not have anticipated. The cost of being wrong is lower now (regenerate instead of refactor), but the epistemic problem is unchanged. You are still trying to think your way to a correct specification before reality has had its say.

This is why the spec-heavy workflow feels productive but often isn't. Writing the spec triggers the same illusion of completeness that big-design-up-front always did - you have produced a coherent artifact, it reads well, it covers the cases you can imagine. The cases you cannot imagine remain, as ever, exactly where they were - waiting in production.

The Question Worth Sitting With

Is the rising specs-to-code ratio a sign that we have finally found the right level of abstraction - where humans describe intent and machines handle implementation? Or is it a sign that we have forgotten what agile actually taught us, distracted by the seductive feeling of producing thousands of lines of "code" by writing a few hundred lines of English?

The honest answer is probably both, depending on the project. For well-understood domains with stable requirements - internal tools, glue code, standard CRUD, the work that bored generations of consultants - Waterfall 2.0 is genuinely fine, perhaps even optimal. The territory has been mapped a thousand times; the spec really can capture it. For genuinely novel software, where you are discovering the problem as you build it, no amount of upfront specification will save you, no matter how good the generator. The map is the wrong size.

The methodology wars of the 2000s ended with a kind of synthesis - agile for new things, more structured approaches for known things. We may be heading for a similar truce. But before we declare victory, it is worth remembering that every previous "this time we'll specify our way out of complexity" movement ended in tears, and the people who saw it coming were always reading Royce, Brooks, and the Agile Manifesto.

The history of software is the history of forgetting what we just learned. The specs are getting longer. The folders are filling up. Somewhere, Royce is shaking his head at page two.